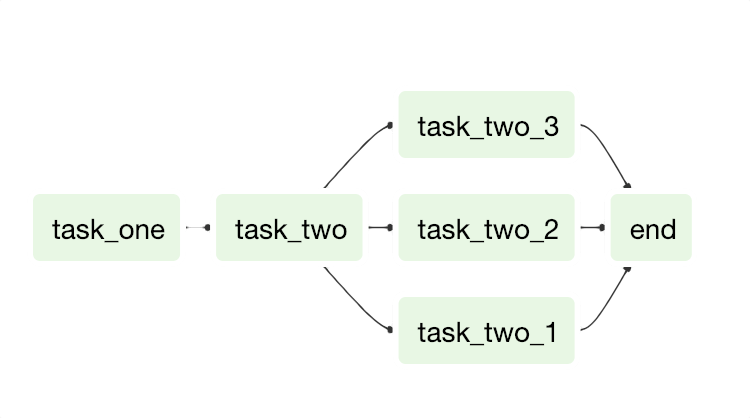

A typical pipeline is responsible for extracting, transforming, then loading data; this is where the name “ETL” comes from. Airflow has, and always will have, strong support for each of these data operations through its rich ecosystem of providers and operators.

In recent years, dbt Core has emerged as a popular transformation tool in the data engineering and analytics communities. And this is for good reason: it offers users a simple, intuitive SQL interface backed by rich functionality and software engineering best practices. Many data teams have adopted dbt to support their transformation workloads, and dbt’s popularity is fast-growing.

The best part: Airflow and dbt are a match made in heaven. dbt equips data analysts with the right tool and capabilities to express their transformations. Data engineers can then take these dbt transformations and schedule them reliably with Airflow, putting the transformations in the context of upstream data ingestion. By using them together, data teams can have the best of both worlds: dbt’s analytics-friendly interfaces and Airflow’s rich support for arbitrary Python execution and end-to-end state management of the data pipeline.

Airflow and dbt: a short history

Despite the obvious benefit of using dbt to run transformations in Airflow, there has not been a method of running dbt in Airflow that’s become ubiquitous.

For a long time, Airflow users would use the BashOperator to call the dbt CLI and execute a dbt project. While this worked, it never felt like a complete solution: with this approach, Airflow has no visibility into what it’s executing, and thus treats the dbt project like a black box. When dbt models fail, Airflow doesn’t know why; a user has to spend time manually digging through logs and hopping between systems to understand what happened. When the issue is fixed, the entire project has to be restarted, wasting time and compute re-running models that have already run successfully. There are also Python dependency conflicts between Airflow and dbt that make getting dbt installed in the same environment very challenging.

In 2020, Astronomer partnered with Updater to release a series of three blog posts on integrating dbt projects into Airflow in a more “Airflow-native” way by parsing dbt’s manifest.json file and constructing an Airflow DAG as part of a CI/CD process. This was certainly a more powerful approach and solved some of the growing pains called out in the initial blog post. However, this approach was not scalable: an end user would download code (either directly from the blog post, or from a corresponding GitHub repository) and manage it themselves.
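To make the manifest.json idea more concrete, here is a minimal sketch of the parsing step only. It is not the code from the Astronomer and Updater posts. It assumes the usual manifest layout, where the top-level `nodes` mapping is keyed by unique ID and each node carries a `resource_type` and a `depends_on.nodes` list. The function names and the sample project (`shop`, `stg_orders`, and so on) are hypothetical, and the standard library's `graphlib` stands in for whatever ordering logic a real DAG generator would use.

```python
import json
from graphlib import TopologicalSorter  # standard library, Python 3.9+


def model_dependencies(manifest: dict) -> dict[str, set[str]]:
    """Map each dbt model's unique ID to the set of models it depends on."""
    nodes = manifest.get("nodes", {})
    # Keep only models; tests, seeds, and snapshots are ignored in this sketch.
    models = {
        uid for uid, node in nodes.items()
        if node.get("resource_type") == "model"
    }
    deps: dict[str, set[str]] = {}
    for uid in models:
        upstream = nodes[uid].get("depends_on", {}).get("nodes", [])
        # Drop upstream references that are not models (for example, sources).
        deps[uid] = {u for u in upstream if u in models}
    return deps


def run_order(manifest: dict) -> list[str]:
    """Return model IDs in an order where every model follows its upstreams."""
    return list(TopologicalSorter(model_dependencies(manifest)).static_order())


# Hypothetical, heavily trimmed manifest used purely for illustration.
SAMPLE_MANIFEST = json.loads("""
{
  "nodes": {
    "model.shop.stg_orders": {
      "resource_type": "model",
      "depends_on": {"nodes": ["source.shop.raw.orders"]}
    },
    "model.shop.stg_customers": {
      "resource_type": "model",
      "depends_on": {"nodes": ["source.shop.raw.customers"]}
    },
    "model.shop.customer_orders": {
      "resource_type": "model",
      "depends_on": {"nodes": ["model.shop.stg_orders", "model.shop.stg_customers"]}
    },
    "test.shop.not_null_orders_id": {
      "resource_type": "test",
      "depends_on": {"nodes": ["model.shop.stg_orders"]}
    }
  }
}
""")

if __name__ == "__main__":
    for model_id in run_order(SAMPLE_MANIFEST):
        print(model_id)
```

In a DAG generator, each model ID would become one Airflow task and each dependency edge one task dependency, so a failed model can be retried without re-running the whole project.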
